An Analytics Marketplace for SMEs

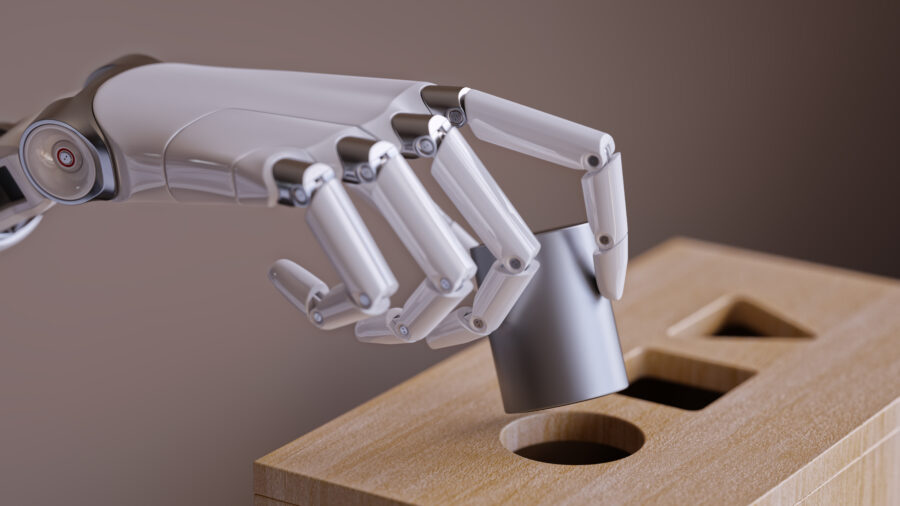

Successive waves of innovation have repeatedly reshaped which industries lead the economy, which companies lead those industries, and which business models they rely on. Software-based value creation and communication over the Internet have shaped business models for years and were standard well before the COVID-19 pandemic. In 2021, technology companies are by far among the most valuable publicly traded companies by market capitalization: the six most valuable companies in the U.S. S&P 500 stock index are all technology companies (Figure 1).

The services and business models of these digital companies, such as Google and Facebook, are based on storing and processing user data. Machine learning (ML) turns the immense amounts of data generated while the services are used into insights about users and their behavior, which can then be marketed profitably. Because so-called Big Tech services are so popular, users are virtually forced to hand over their data, while providers keep issuing new terms and conditions. Due to the lock-in effects of GAFA services (Google, Amazon, Facebook and Apple) in both private and business contexts, customers usually see no alternative to giving consent. The GAFA companies thus have access to user data that is unavailable to other market participants, who in turn have to buy it from the large digital companies in the form of services. In the long term, this involuntary cycle will lead to an oligopoly in the data market, with serious consequences for competition across the economy.

Not only small and medium-sized enterprises (SMEs) but also large corporations now depend on GAFA's digital capabilities, as BMW's strategic cooperation with Amazon Web Services shows. The car manufacturer gains the analytical capabilities of the Amazon cloud, but at the same time outsources forward-looking, intelligent services such as a new voice processing solution for its fleet (BMW Group, 2020). For SMEs, AI offers enormous potential to scale and increase efficiency, yet these companies in particular have no alternative to GAFA services, for example because they lack in-house machine learning knowledge. In addition, without the mostly cloud-based GAFA services, SMEs often could not afford to build their own intelligent services because of the high development costs. One argument against outsourcing intelligent services in this way, however, is that SMEs hand digital innovation over to GAFA, because the knowledge flows to the providers. The consequences of this trend are difficult to assess, but data is at the core of the problem: those with access to the largest data troves can create innovative, intelligent services and, at the same time, build forward-looking AI capabilities.

Current Challenges in the Use of Machine Learning for SMEs

A company that wants to solve a business problem using machine learning needs three elementary resources: relevant internal and external data, machine learning expertise (ML experts), and computing power. The lack of ML expertise is a major problem, especially for small and medium-sized enterprises (SMEs), which often do not have the financial resources to hire experienced ML experts. In small companies, the initiative and responsibility for ML projects usually lie with a single person who has data science experience, domain knowledge, and IT expertise. In medium-sized companies, several people may be involved, but frameworks for collaborative work and standardized processes for implementing an ML project are missing. In both cases, the lack of expertise often means that identified use cases do not survive the concept or pilot phase.

Data availability and quality are further major issues. In some cases, the amount of available data is not sufficient to train the ML models adequately. The data sets are also insufficiently standardized, which increases the cost of data preprocessing and thus makes the projects less attractive financially. Where the amount of data is sufficient to implement a use case, SMEs increasingly partner with external providers to buy in AI expertise. While small companies prefer domain-specific software providers, medium-sized companies also conduct research projects with universities to build machine learning knowledge cooperatively. Overall, company size and machine learning maturity appear to be strongly correlated: the larger the company, the stronger its capabilities with the technology. SMEs do have flat hierarchies and management that supports employee ML projects, but they lack the data and skills to implement AI projects (Bauer et al., 2020).

A Data Analytics Marketplace for SMEs

(1) Idea

An analytics marketplace could address both the lack of ML expertise and the lack of data. If all the resources required for machine learning projects were available via a platform with marketplace functionality, different tasks could be analyzed using suitable ML models. The data required for training the AI models could be made available to all interested parties on a decentralized and democratically managed basis, e.g., using distributed ledger technology. AI models and the training of these models by experts could also be offered via such a marketplace. This could break up the dominance of Big Tech and establish a decentralized value chain for AI projects. Such a marketplace could also be implemented as a central platform (like Airbnb and other sharing platforms), but with a sufficiently large number of participants the platform operator would gain a position of power over suppliers and consumers. In an analytics marketplace, an operator with such power would be a hindrance, because the participating companies would not want to place sensitive internal data and the insights derived from it, both of which are competitively relevant, in the hands of a third party.

(2) Concept and Roles

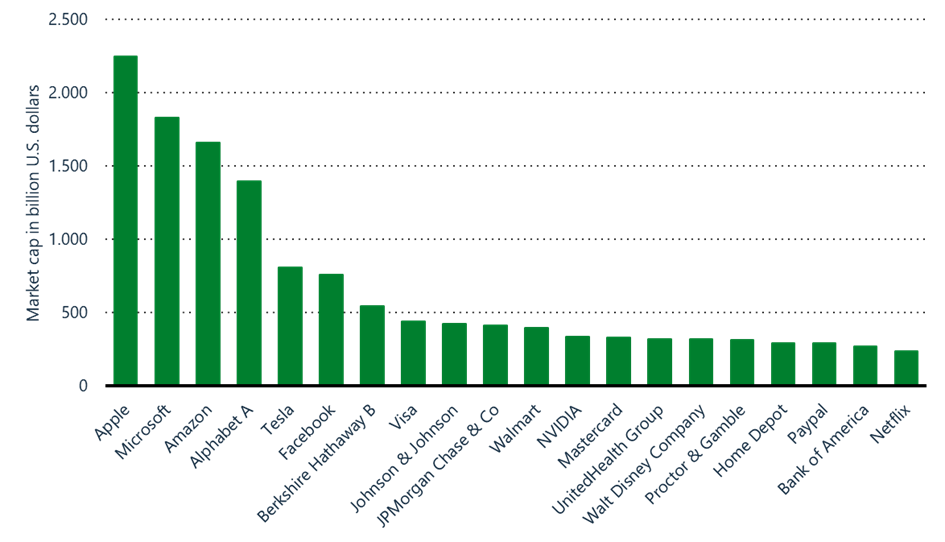

Distributed ledger technology (DLT) offers a possible foundation for the analytics marketplace, because this infrastructure allows information to be distributed anonymously between the parties. In addition, market operations can be decentralized using predefined logic in the form of smart contracts. This makes it possible, for example, to determine automatically whether the parties interested in cooperating meet the requirements for a successful partnership, e.g., with regard to data and model quality and the competence to process the requested tasks. The marketplace supports not only the implementation of use cases for individual companies but also the sharing of insights or trained models for specific use cases. Lanquillon and Schacht (2019) propose the following six roles and their respective tasks for such a marketplace:

- Task creators define the analysis task to be processed and formulate business and technical requirements for the solution as well as criteria for evaluating the solution.

- Model builders either provide ML models that are suitable and pre-trained for the task or train new models on the basis of suitable data sets that are made available on the marketplace.

- Data providers supply data from various sources; the focus here is on compliance with data protection rules and on data security. When data is provided for model building, it must be ensured that the model builder can neither learn where the data came from nor steal it. To this end, the roles of data owner and data provider should ideally be separate, so that data is aggregated across multiple companies and individual data sets can no longer be attributed to the companies that supplied them.

- IT infrastructure providers supply storage space, e.g., to integrate different data sources or to store data sets immutably. They also supply computing power for model training as needed. IT infrastructure providers can be organizations such as cloud providers as well as private individuals with unused storage space or computing capacity.

- Model validators assess whether the resulting ML model meets the business requirements, such as the required model quality.

- Model users are not necessarily limited to the task creators, because models can be made available both publicly and bilaterally. The models can then be offered directly as libraries or integrated indirectly into software and products, e.g., via suitable interfaces as a model as a service.
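The six roles, together with the automated check of cooperation requirements mentioned before the list, can be sketched as a small data model. This is a minimal illustration and not part of the proposal by Lanquillon and Schacht (2019): the classes `Task` and `Offer`, the quality scores in the range 0 to 1, and the functions `is_eligible` and `eligible_offers` are hypothetical names and assumptions chosen for this example. In a real marketplace, comparable rules would presumably be encoded in a smart contract rather than in plain Python.

```python
from dataclasses import dataclass
from enum import Enum
from typing import List


class Role(Enum):
    """The six marketplace roles named in the text."""
    TASK_CREATOR = "task creator"
    MODEL_BUILDER = "model builder"
    DATA_PROVIDER = "data provider"
    IT_INFRASTRUCTURE_PROVIDER = "IT infrastructure provider"
    MODEL_VALIDATOR = "model validator"
    MODEL_USER = "model user"


@dataclass(frozen=True)
class Task:
    """An analysis task published by a task creator (fields are assumptions)."""
    description: str
    min_data_quality: float   # hypothetical score in [0, 1]
    min_model_quality: float  # hypothetical score in [0, 1], e.g. accuracy


@dataclass(frozen=True)
class Offer:
    """An offer made by a participant acting in exactly one role."""
    participant: str
    role: Role
    quality: float  # hypothetical quality score in [0, 1]


def is_eligible(task: Task, offer: Offer) -> bool:
    """Check whether an offer meets the thresholds the task creator defined."""
    if offer.role is Role.DATA_PROVIDER:
        return offer.quality >= task.min_data_quality
    if offer.role is Role.MODEL_BUILDER:
        return offer.quality >= task.min_model_quality
    # Simplification: other roles carry no quality threshold in this sketch.
    return True


def eligible_offers(task: Task, offers: List[Offer]) -> List[Offer]:
    """Keep only the offers that satisfy the task's requirements."""
    return [offer for offer in offers if is_eligible(task, offer)]


# Usage: one data provider qualifies, one model builder falls short.
task = Task("Forecast spare-part demand", min_data_quality=0.8, min_model_quality=0.9)
offers = [
    Offer("company-a", Role.DATA_PROVIDER, 0.85),
    Offer("lab-b", Role.MODEL_BUILDER, 0.70),
    Offer("cloud-c", Role.IT_INFRASTRUCTURE_PROVIDER, 0.0),
]
accepted = eligible_offers(task, offers)
```

Here `accepted` contains the offers from `company-a` and `cloud-c`; the model builder `lab-b` is filtered out because its score is below the task's model quality threshold.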

(3) Key Design Aspects

In order to enable data exchange between different parties via a distributed platform and make machine learning functionalities usable, three key aspects must first be addressed (Lanquillon and Schacht, 2019):

- Trust between parties that are unknown to each other is established via the blockchain. The technology ensures that transactions are carried out on a consensus basis and that, for example, data transfers and other facts are documented transparently and traceably.

- An incentive system significantly influences the interactions on the marketplace and thus its success. DLT-based smart contracts can, for example, trigger micropayments automatically once tasks have been completed; cryptocurrencies can be used here because they are also based on blockchains. To keep the marketplace participants honest, model builders, data providers, IT infrastructure providers, and model validators would have to deposit a stake, which is used to compensate the task creators and/or model users in case of fraud or poor performance.

- Data protection is particularly relevant because data and models are exchanged between parties that do not know each other. Technically, encrypted sandbox model containers are suitable for model creation, validation, and application: access and processing rights are separated so that no party can attribute or misappropriate the input data of the models or the results (Unit 42, 2019)[1].
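The staking rule from the incentive bullet can be illustrated with a small, purely in-memory sketch. It is not smart-contract code and is not taken from Lanquillon and Schacht (2019). The function `settle`, its parameters, the flat `reward` per participant, and the rule that a missing outcome counts as a failure are all assumptions made for this example. Amounts are plain integers standing in for the smallest unit of some token.

```python
from typing import Dict


def settle(
    stakes: Dict[str, int],
    outcomes: Dict[str, bool],
    reward: int,
    task_creator: str,
) -> Dict[str, int]:
    """Compute payouts once a task is finished.

    stakes:   participant -> stake deposited before the task started
    outcomes: participant -> True if the participant delivered as agreed
    reward:   micropayment paid to every participant who delivered (assumption)
    """
    if task_creator in stakes:
        raise ValueError("the task creator does not deposit a stake in this sketch")
    if reward < 0 or any(stake <= 0 for stake in stakes.values()):
        raise ValueError("reward must be non-negative and stakes positive")

    payouts = {task_creator: 0}
    for participant, stake in stakes.items():
        # Assumption: a participant without a recorded outcome is treated as failed.
        if outcomes.get(participant, False):
            # Honest participants get their stake back plus the micropayment.
            payouts[participant] = stake + reward
        else:
            # Fraud or poor performance: the stake compensates the task creator.
            payouts[participant] = 0
            payouts[task_creator] += stake
    return payouts


# Usage: the data provider fails, so its stake goes to the task creator.
payouts = settle(
    stakes={"builder": 50, "validator": 20, "data": 30},
    outcomes={"builder": True, "validator": True, "data": False},
    reward=5,
    task_creator="creator",
)
```

In this example the model builder and the validator each recover their stake plus the reward of 5, while the data provider's stake of 30 is paid to the task creator. A real implementation would additionally need to decide who judges the outcomes, which is the task of the model validators in the role model above.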

Critical Reflection

SMEs often lack access to external, domain-relevant information and the expertise to generate meaningful insights using machine learning. As a result, many ML projects fail in the planning phase, and the potential of data and AI expertise that may be distributed across multiple SMEs cannot be exploited. An analytics marketplace is one possible solution, but it involves technical and organizational challenges. For example, although DLT can establish transparency, traceability, and thus trust in the marketplace, this infrastructure processes data more slowly than centralized databases and is more expensive to operate, which makes it only partially suitable for larger volumes of data. On the machine learning side, the challenges are computationally intensive data processing and data security, since all AI models are trained “off-chain”, i.e., outside the DLT. Encapsulated, secure environments based on the sandbox principle are one possible solution. Even if such a data analytics marketplace will not break GAFA’s market power any time soon, it can still motivate SMEs to develop innovative and intelligent services and to build up AI expertise.

[1] If you are interested, you can read more about sandbox containers here.

Sources

AssetDash. (February 4, 2021). Market capitalization of largest companies in S&P 500 Index as of February 4, 2021 (in billion U.S. dollars) [Graph]. In Statista. Retrieved March 16, 2021, from https://www.statista.com/statistics/1181188/sandp500-largest-companies-market-cap/

Bauer, M., van Dinther, C., & Kiefer, D. (2020). Machine Learning in SME: An Empirical Study on Enablers and Success Factors. Retrieved March 22, 2021, from https://www.researchgate.net/publication/344651203_Machine_Learning_in_SME_An_Empirical_Study_on_Enablers_and_Success_Factors

BMW Group (2020). AWS and BMW Group Team Up to Accelerate Data-Driven Innovation. URL: https://www.press.bmwgroup.com/global/article/detail/T0322118EN/aws-and-bmw-group-team-up-to-accelerate-data-driven-innovation?language=en

Lanquillon, C. & Schacht, S. (2019). Der Analytics-Marktplatz. In S. Schacht & C. Lanquillon (Eds.), Blockchain und maschinelles Lernen. Wie das maschinelle Lernen und die Distributed-Ledger-Technologie voneinander profitieren. Springer Vieweg.